From completion to consequence

When the surface area was mostly editors and pull requests, the failure modes were familiar: style nitpicks, wrong imports, tests that never ran. As soon as an agent can open tickets, tweak infrastructure, or touch customer data, the cost model changes. The question stops being "did it write decent TypeScript?" and becomes "did it know which system it was holding?"

That shift rewards platforms that treat context and permission as first-class concerns, not as bolt-on prompts pasted into a chat box.

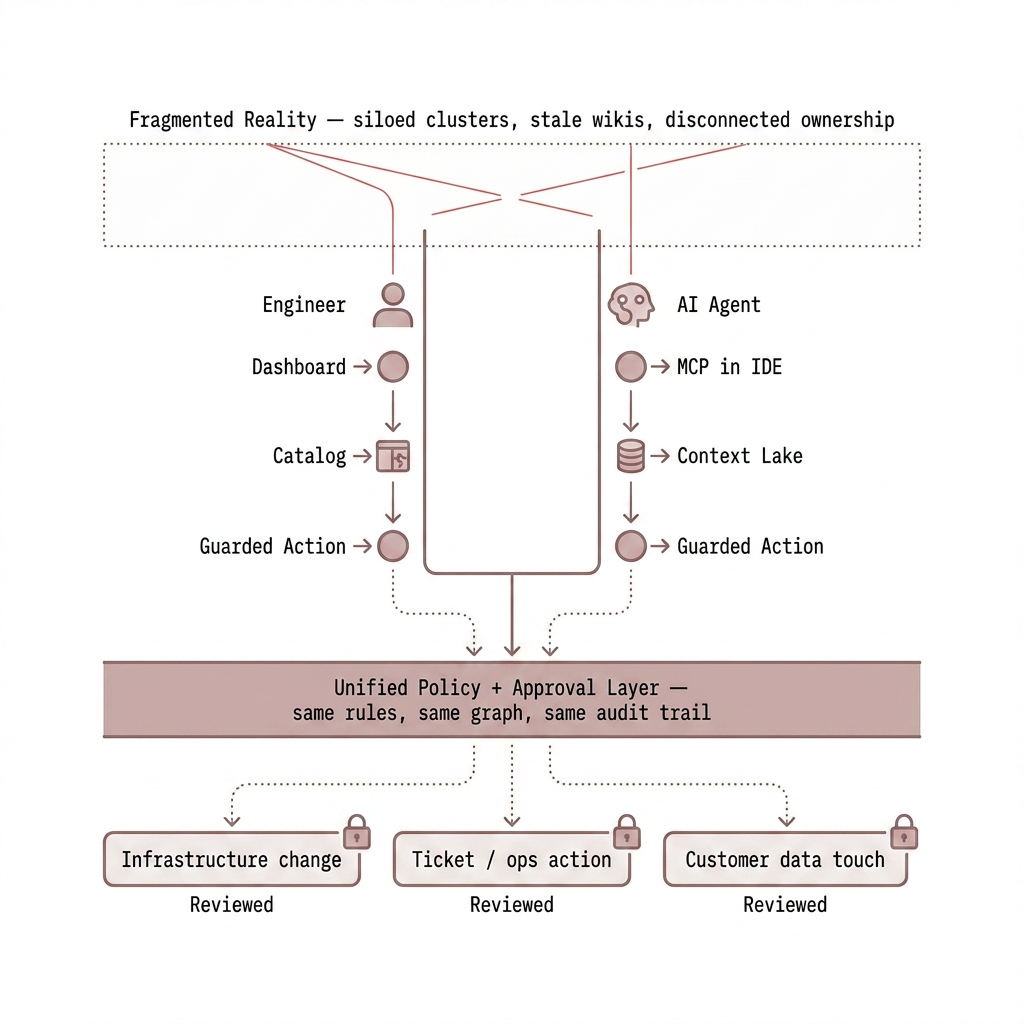

Fragmentation taxes humans—and models

Cloud-native teams already juggle clusters, pipelines, observability stores, access brokers, and ad hoc spreadsheets. Each silo holds a slice of truth: who owns a service, what depends on what, which changes are in flight. People bridge the gaps with meetings and muscle memory.

A model has no muscle memory. If ownership, topology, and policy live in disconnected systems—or worse, only in someone's head—automation will either refuse to act or improvise dangerously. The bottleneck is rarely raw model quality; it is missing, stale, or inconsistent ground truth.

What has to exist before you trust a loop

Useful autonomy needs three things working together: a durable picture of the estate (services, dependencies, environments), rules that say who may change what under which approvals, and a record humans can audit when something misfires. Skip any leg of that tripod and you get either paralysis or shadow IT with a prettier UI.
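To make the tripod concrete, here is a minimal sketch in Python. Everything in it is hypothetical and chosen for illustration: the `CATALOG` entries, the `request_change` function, and the two policy rules are assumptions, not Exemplar's API or anyone's production policy. The point is only that the picture of the estate, the approval rule, and the audit record are consulted together on every request.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Service:
    name: str
    owner: str
    environment: str


@dataclass(frozen=True)
class AuditEntry:
    timestamp: str
    actor: str
    action: str
    service: str
    allowed: bool
    reason: str


# Leg 1: a durable picture of the estate (hypothetical entries).
CATALOG = {
    "checkout": Service("checkout", owner="payments-team", environment="prod"),
    "docs-site": Service("docs-site", owner="web-team", environment="staging"),
}

# Leg 3: a record humans can audit later.
AUDIT_LOG: list[AuditEntry] = []


def request_change(actor, action, service_name, approved_by=None) -> bool:
    """Leg 2: decide whether the change may proceed, and log the decision."""
    service = CATALOG.get(service_name)
    if service is None:
        # No ground truth for this target, so refuse rather than improvise.
        allowed, reason = False, "service not in catalog"
    elif service.environment == "prod" and approved_by is None:
        allowed, reason = False, "prod change needs an approver"
    elif approved_by == actor:
        allowed, reason = False, "actor cannot approve their own change"
    else:
        allowed, reason = True, "within policy"

    # Every decision is recorded, including refusals.
    AUDIT_LOG.append(
        AuditEntry(
            timestamp=datetime.now(timezone.utc).isoformat(),
            actor=actor,
            action=action,
            service=service_name,
            allowed=allowed,
            reason=reason,
        )
    )
    return allowed
```

In this sketch, an agent asking to restart `checkout` without an approver is refused, and the refusal still lands in `AUDIT_LOG`. Remove the catalog lookup and the function cannot tell prod from staging; remove the log and nobody can reconstruct why a change went through.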

How we approach it at Exemplar

Exemplar is built around a single operational layer: the catalog and integrations feed a Context Lake, so questions and actions draw on the same graph-backed reality your teams maintain rather than on a one-off RAG dump. Agentic Assistant for Day 2 Ops exposes the capabilities available in the product through conversational surfaces and through MCP in the IDE, so policy does not fork by channel.

Shared substrate

Catalog, integrations, and context live in one place, so agents and engineers reason over a single map of services and dependencies instead of parallel fictions.

Governed change

Policy and approvals apply whether a human clicks a button or an agent proposes an action—so "fast" does not mean "unreviewed."

Same tools everywhere

Dashboard, chat-style assistant, and MCP clients invoke the same guarded actions—reducing the class of bugs where the IDE can do something the console would have blocked.
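One way to picture "same tools everywhere" is a single guarded function that every channel calls, with the permission check living on the action rather than in each front end. The sketch below is an assumption-laden illustration: `ALLOWED_ROLES`, `guarded`, `restart_service`, and the three channel functions are hypothetical names, not part of Exemplar or of any MCP SDK.

```python
from functools import wraps

# Hypothetical policy table: which roles may invoke which action.
ALLOWED_ROLES = {"restart_service": {"sre"}}


def guarded(action_name):
    """Wrap an action so the role check runs regardless of the caller."""
    def decorator(fn):
        @wraps(fn)
        def wrapper(role, *args, **kwargs):
            if role not in ALLOWED_ROLES.get(action_name, set()):
                raise PermissionError(f"{role} may not run {action_name}")
            return fn(*args, **kwargs)
        return wrapper
    return decorator


@guarded("restart_service")
def restart_service(service):
    return f"restart requested for {service}"


# Each channel is a thin adapter over the same guarded action.
def from_dashboard(role, service):
    return restart_service(role, service)


def from_chat(role, service):
    return restart_service(role, service)


def from_mcp_client(role, service):
    return restart_service(role, service)
```

Because none of the adapters carries its own check, there is no path where the IDE allows what the console would have blocked: a role outside the table gets a `PermissionError` from all three.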

The bar we are aiming for

The end state is not replacing engineers; it is removing swivel-chair work that machines can do safely when grounded in live context and explicit boundaries. Getting there is less about a hotter model and more about boring platform hygiene—then letting automation ride on top without improvising its own facts.

Editorial—general discussion only.